$3M in research grants

Rolling acceptances open

Building the benchmarks that define frontier AI

Open Benchmarks Grants funds researchers, labs, and engineers creating the open-source evaluation infrastructure that shapes how the next generation of AI systems is built and measured.

In partnership with

What we fund

We're looking for benchmarks that advance the fundamental measurement axes of agentic AI. Below are the categories we're actively seeking — but we welcome independent research directions too.

Agentic task completion

Multi-step task execution, tool use, and goal-directed behavior across real-world environments and interfaces.

Long-context reasoning

Evaluation of coherence, retrieval, and synthesis across extended contexts — documents, codebases, and conversations.

Multimodal evaluation

Benchmarks that assess understanding and reasoning across text, images, structured data, and code in combination.

Planning under uncertainty

Evaluation of sequential decision-making, backtracking, and robustness to ambiguous or incomplete specifications.

Reliability & safety

Measuring consistency, refusal calibration, adversarial robustness, and instruction-following under pressure.

Programmatic data quality

Benchmarks and tooling for assessing training dataset quality, annotation consistency, and labeling artifacts.

Independent directions are welcome. If your research addresses a critical evaluation gap not listed above, we want to hear from you. The steering committee reviews all proposals on their merits.

Is this for you?

Strong candidates

- Academic researchers at any career stage, including PhD students and postdocs

- Independent researchers and lab teams working on open evaluation tooling

- Engineers building infrastructure for benchmark creation and reproducibility

- Teams with a concrete benchmark proposal and existing preliminary work

- International applicants — geography is no barrier

- Researchers willing to publish resulting datasets and code under open licenses

Not a fit

- Closed-source or proprietary benchmark development

- Work that primarily evaluates a single commercial model or product

- Benchmark proposals without a concrete methodology or scope

- Projects where Snorkel AI or its partners are the sole intended beneficiaries

IP & licensing. Grant recipients retain full IP ownership of their work. We require outputs to be published under an OSI-approved open license. Snorkel AI receives no exclusivity or commercial rights.

What you receive

Expert data credits

Access to Snorkel's expert annotation network — thousands of specialists across academic, professional, and domain-specific fields — to generate high-quality training and evaluation data at scale.

Research collaboration

Direct team access

Work directly with Snorkel and partner research teams. Steering committee members are available for advisory sessions. We don't just write a check — we're active collaborators.

Partner compute & platform

Prime Intellect · Hugging Face

Access to compute credits from Prime Intellect and platform credits from Hugging Face for hosting, running, and distributing your benchmarks and datasets.

Common questions

Things we've heard from researchers before they applied.

International applicants are fully eligible. Geography is not a consideration in our review process. Grants are available to researchers worldwide, subject to standard legal and compliance requirements for fund disbursement in your country.

How to apply

01

Submit your proposal

A brief application describing your benchmark, the evaluation gap it addresses, and your methodology. Two to four pages is sufficient. No templates required.

02

Steering committee review

Proposals are reviewed by our committee of academic and industry leaders. Shortlisted applicants are invited for a conversation with our advisory board.

03

Grant & collaboration kickoff

Selected teams receive their grant, expert data credits, and compute allocations. We agree on a research collaboration structure and publication timeline.

04

Publish & release

Recipients publish the resulting dataset, benchmark, or paper under an open license, with acknowledgement of Open Benchmarks Grants support. We amplify the work across our network.

Rolling acceptances — no fixed deadline. Apply when you're ready.

Steering committee

Proposals are reviewed by a committee of researchers and engineers at the frontier of AI evaluation. They bring independent academic judgment — Snorkel does not direct their decisions.

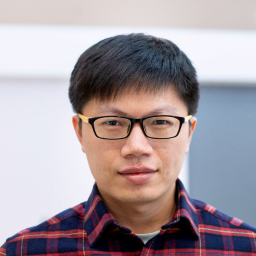

Karthik Narasimhan

Princeton University

Professor of Computer Science at Princeton. Research focuses on reinforcement learning for language and agentic systems.

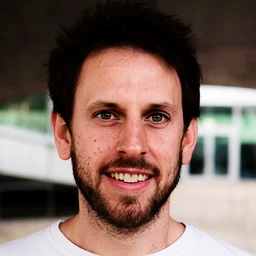

Chris Ré

Stanford University

Associate Professor at Stanford and co-founder of Snorkel AI. Pioneered programmatic data development for machine learning.

Ludwig Schmidt

Stanford University · LAION

Stanford researcher and LAION collaborator. Co-creator of CIFAR-10.1, WILDS, and several foundational evaluation benchmarks.

Yu Su

Ohio State University

Professor at Ohio State. Research spans conversational AI, question answering, and evaluation methodology for language models.

Lewis Tunstall

Hugging Face

Machine learning engineer at Hugging Face and co-author of Natural Language Processing with Transformers.

Fred Sala

Univ. of Wisconsin–Madison

Assistant Professor at Wisconsin–Madison. Research focuses on data-centric AI, weak supervision, and programmable training pipelines.