Funding the next wave of frontier benchmarks

Benchmarks that define and advance the frontier

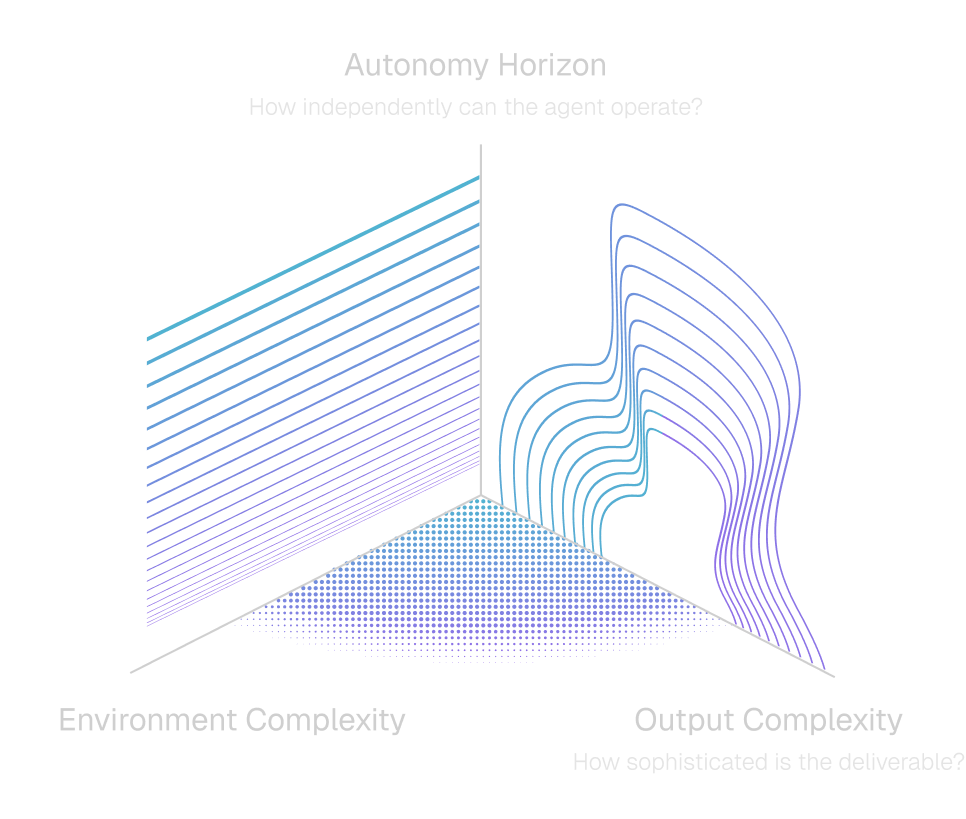

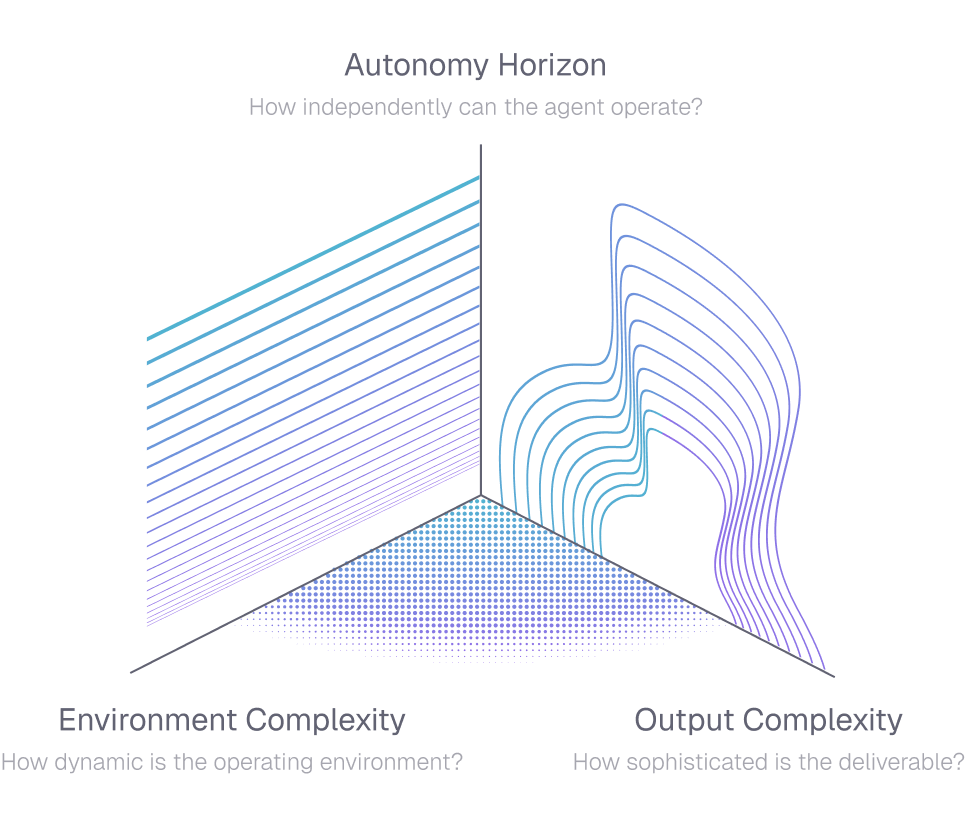

Our ability to measure AI has been outpaced by our ability to develop it, and this evaluation gap is one of the most important problems in AI. Looking ahead, benchmarks must close the gap between what we measure and what we actually encounter along three core dimensions: environment complexity, autonomy horizon, and output complexity.

Backed by a $3M commitment, the Open Benchmarks Grants program funds open-source datasets, benchmarks, and evaluation artifacts that shape how frontier AI systems are built and evaluated.

Call for proposals

We are seeking applications from researchers, labs, and engineers building benchmarks for the next wave of AI capabilities. We’re looking for benchmarks that advance the fundamental axes of AI agency (read more on our blog), and we also welcome independent directions from the research community.

How to apply

Apply

Selection

Launch

Publication

Steering committee

Karthik Narasimhan

Chris Ré

Ludwig Schmidt

Yu Su

Lewis Tunstall